Modern web applications are getting more and more complex. Increased expectations from users and business stakeholders have upped the ante for what a web application should do. It's no longer enough to have a simple site with the information people need. Now, highly interactive pages with real-time or instantaneous responses seem to be the norm.

Today's web applications have lots of moving parts, expanding the area that needs testing. Because of this, end-to-end tests are more important than ever to avoid regressions and ensure things are working well.

Development teams working on these kinds of applications most likely have test coverage for their work. These tests often take shape in the form of unit and functional tests. While these tests are essential, they're not enough to provide confidence that everything's working as expected.

Unit and functional tests usually check parts of the code in isolation. They tell you that a specific part of the code is working as expected. But these pieces often have to interact with other areas of the application. These kinds of tests won't point out if there's an issue with how two or more parts of the system work together.

End-to-end tests help deal with this because they ensure that the entire application is functioning together in peaceful harmony. Not only can end-to-end tests verify that your code is working well, but they can also check that third-party services are working well.

Usually, testing the entirety of an application and any external services is done manually. Teams of developers and testers go through the application and ensure the app as a whole is working as expected.

However, if the application is large or complicated enough - which most applications are these days - this manual testing can take a ton of time to complete. Here is where automation comes into play.

Keep in mind that automation is not a "be-all and end-all" approach. Manual testing is a vital part of a healthy testing strategy for any project. Automation cannot and should not cover all possible paths for testing. It should not be the only means of testing - a balanced approach works best.

Automating common paths for an application helps cover repetitive flows for regression testing. It can free up lots of time for testers and allow them to spend their time on other high-value work like exploratory testing.

Choosing a test framework for your end-to-end tests

There are plenty of great tools out there for writing and running end-to-end tests. Depending on your project, your needs, and your budget, you'll have a plethora of options to choose from.

I've experimented with different end-to-end testing tools this year for my organization. Our main goals were to find a tool that our developers could pick up, that could run tests in multiple browsers, and that was open-source. The tool I found that checked all the boxes was TestCafe.

In the time we've been using the tool, it's proven to be a great addition to our testing strategy. Here are a few reasons you should consider using TestCafe as your end-to-end testing framework:

- Free and open-source. TestCafe is an actively maintained open-source project that's completely free. The company behind TestCafe, DevExpress, has a test recorder (TestCafe Studio) which is a paid offering, but it's a separate product and not required to use alongside the open-source TestCafe tool.

- It doesn't rely on Selenium WebDriver. Selenium is the de-facto standard when it comes to testing automation for web apps. However, it has its fair share of issues. It lacks some features, like automatic waits for dynamic content, and needs extra configuration for functionality like mobile browser testing. TestCafe runs its tests via a web proxy, and the tool contains tons of features out of the box.

- Tests are written in JavaScript. If you're building a modern web application, your team is most likely familiar with JavaScript. With TestCafe, your entire team can write and maintain the end-to-end test suite without having to learn a new programming language.

- Lots of built-in functionality. As mentioned, TestCafe has a ton of features ready to use without additional setup. Among the main features it includes are the ability to test with different browsers and configurations, run tests concurrently, and independently manage user roles.

TestCafe isn't the only tool out there with most of these features. Other highly-recommended tools to evaluate are Cypress, Nightwatch.js, and Protractor. Depending on what you need, one of those projects might fit the bill better than TestCafe. Take the time to explore alternatives before choosing a tool.

The rest of this article covers a few examples to get started with TestCafe. It serves as a starting point and demonstrates how simple it is to write end-to-end tests with the tool.

Getting started with TestCafe

TestCafe uses JavaScript as its primary programming language for writing tests. This article assumes you're familiar with JavaScript. If you're not, I recommend taking a course such as Beginner JavaScript by Wes Bos before proceeding.

(Note: I'm in no way associated with Wes and have not taken this particular course. However, he's known for quality courses and content, and I'm sure Beginner JavaScript is an excellent course for learning the basics of the language.)

Before beginning, TestCafe does have a few prerequisites. Mainly, your development environment must have Node.js and NPM set up before installing TestCafe. If you don't have Node.js installed, download the latest version for your system and install it. NPM is part of Node.js, so there are no extra steps needed.

For the examples in this article, I'll use the Airport Gap application that I built as the place to point the tests we'll cover here. This application was built mainly for helping others practice their API testing skills, but it can also serve to teach the basics of end-to-end testing.

When beginning to create an end-to-end test suite, you have a choice on where to place the tests. You can choose to keep your tests separate or put them alongside the rest of your application's code. There's no right answer - each approach has its pros and cons. For our examples, we'll write the tests in a separate directory, but you can still follow along if it's in the same repo as the rest of your code.

Inside an empty directory, we first begin by creating a package.json file. This file is used by Node.js and NPM to keep track of our project's dependencies and scripts, among other functionality. You can create the file using the command npm init -y. This command creates a basic package.json file that serves as a starting point. Most JavaScript / Node.js projects might need modifications to this file, but we don't need to touch it here.

Next, we'll install TestCafe using NPM. All you need to do is run the command npm install testcafe. This command downloads TestCafe and any dependencies to the current directory. The official documentation suggests installing TestCafe globally - you can do that if you prefer, but we'll keep TestCafe installed in the project directory to keep it simple.

You now have TestCafe installed and ready to use - that's all there is to it! With TestCafe set up, we can begin creating tests.

Writing our first test

A basic test to see how TestCafe works is to load a website and check that an element exists. Our first test loads the Airport Gap test site and verifies that the page loaded properly by checking that the page contains specific text.

Start by creating a new file called home_test.js in your test directory. The name doesn't matter, nor does it have to contain the word 'test'. But as you build your test suite, proper file naming and organization help with maintenance in the long haul.

Open the file, and inside we'll write our first test:

    import { Selector } from "testcafe";

    fixture("Airport Gap Home Page").page(
      "https://airportgap-staging.dev-tester.com/"
    );

    test("Verify home page loads properly", async t => {
      const subtitle = Selector("h1").withText(
        "An API to fetch and save information about your favorite airports"
      );

      await t.expect(subtitle.exists).ok();
    });

Let's break down this test:

- import { Selector } from "testcafe": In the first line of our test, we're importing the Selector function provided by TestCafe. This function is one of the main functions you'll use for identifying elements on the current page. You can use the Selector function to get the value of an element, check its current state, and more. Check out the TestCafe documentation for more information.

- fixture("Airport Gap Home Page"): TestCafe organizes its tests with fixtures. This function, automatically imported when running the test, returns an object used to configure the tests in the file. The object is used to set the URL where the tests begin, run hooks for test initialization and teardown, and set optional metadata. Here, we're setting a descriptive name for the tests to help identify the group during test execution.

- page("https://airportgap-staging.dev-tester.com/"): The page function allows you to specify the URL that loads when each test run starts. In our case, we want the test to begin on the Airport Gap home page. In future tests, we can configure our fixtures to start on other pages like the login page.

- test("Verify home page loads properly", async t => { ... }): The test function provided by TestCafe takes two main parameters - the name of the test, and an async function where we'll write our test code. The async function receives a test controller object, which exposes the TestCafe Test API.

- const subtitle = Selector("h1").withText(...): Here we're using the Selector function previously mentioned. We're using the function to tell TestCafe to look for an h1 element on the page that contains specific text. In this example, this is the subtitle of the page (under the logo). We'll store this selector in a variable to use it later in our assertion.

- await t.expect(subtitle.exists).ok(): Finally, we have our first assertion for the test. This assertion checks that the element we specified previously exists on the current page using the exists property of the selector. We verify that the test passes with the ok() function, which is part of TestCafe's Assertion API.

It's important to note that having an async function for the test allows TestCafe to properly execute its test functions without having to explicitly wait for a page to load or an element to appear. There's a lot more explanation from a technical perspective, but that's out of scope for this article.
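
The Assertion API offers more than ok(). As a rough sketch of how a few related checks might be grouped, the helper below combines ok(), eql(), and contains() on one element. The expectHeading name and its timeoutMs parameter are made up for this illustration and are not part of TestCafe; the function simply receives the test controller t and a selector from the calling test.

```javascript
// Hypothetical helper (not a TestCafe function): runs a few related
// assertions against one heading.
// `t` is the test controller TestCafe passes to each test, and
// `heading` is a selector such as Selector("h1").
async function expectHeading(t, heading, expectedText, timeoutMs = 5000) {
  // TestCafe retries this check until the element exists
  // or the timeout (in milliseconds) runs out.
  await t
    .expect(heading.exists)
    .ok("heading should exist", { timeout: timeoutMs });

  // Exactly one element should match the selector.
  await t.expect(heading.count).eql(1);

  // The element's text should include the expected string.
  await t.expect(heading.innerText).contains(expectedText);
}
```

Inside a test, a call could look like await expectHeading(t, Selector("h1"), "An API to fetch"). Passing t in as an argument keeps the helper free of imports, at the cost of one extra parameter.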

That's a lot of explanation, but it's pretty straightforward when you think of it by action - load a page in the browser, find a selector, and check that the selector exists.

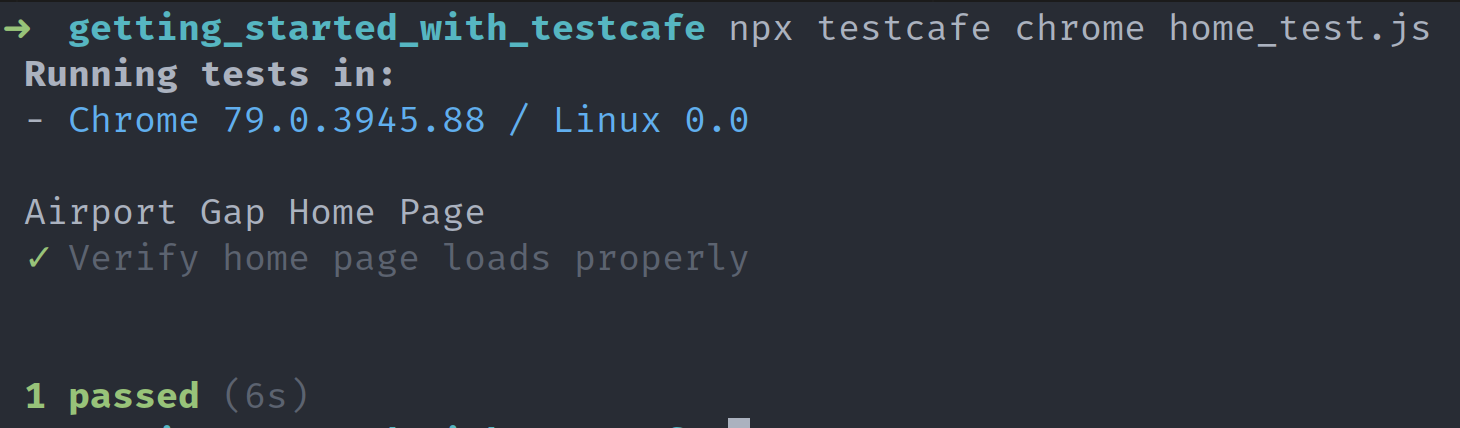

To run the test, we'll use the npx package included in recent versions of NPM. This package executes commands using what's installed either in your development system globally, or installed in the current directory. Since we installed TestCafe in the current directory, npx uses the locally installed version to execute the test commands using the testcafe binary.

The testcafe command requires two parameters. The first parameter is a list of browsers where you want to run your tests. The second parameter is the list of files that contain the tests you want to run.

TestCafe allows you to run the tests on more than one browser concurrently, but for this example, we'll just run it on Google Chrome. Assuming you have Google Chrome installed on your development environment, all you need to do to run the test is use the following command:

    npx testcafe chrome home_test.js

When executing this command, TestCafe automatically opens Google Chrome and sets up the web proxy it uses to run tests. It then goes through the steps from your test: the Airport Gap home page loads, and TestCafe executes the steps inside the test.

Since this is a simple test, you barely see anything happening in the browser. Execution should take a second or two. If everything went well, the results of the test appear in your terminal.

Success! You have written and executed your first end-to-end test with TestCafe. It's a very simple example, but it serves to verify that TestCafe is working correctly.

Interacting with other elements

Checking that a page is loading and contains specific information is a good start here. However, this kind of test isn't rooted in reality. Using a tool like TestCafe to verify that a page is loading is a bit overkill.

Let's write an end-to-end test that's more useful and reflects real-world situations. For the next example, we'll load up the login page, fill out the form, and check that we logged in by verifying the contents of the page.

We can write this test in the same file as the previous example. But it's good practice to keep tests for different flows separate for maintainability. Create a separate file called login_test.js, and write the test inside:

    import { Selector } from "testcafe";

    fixture("Airport Gap Login").page(
      "https://airportgap-staging.dev-tester.com/login"
    );

    test("User can log in to their account", async t => {
      await t
        .typeText("#user_email", "[email protected]")
        .typeText("#user_password", "airportgap123")
        .click("input[type='submit']");

      const accountHeader = Selector("h1").withText(
        "Your Account Information"
      );

      await t.expect(accountHeader.exists).ok();
    });

This test starts the same way as the previous example. We begin by importing the functions from TestCafe, setting up the fixture, and loading our URL. Notice that this time we begin the test from the login page instead of the home page. Loading the page directly skips having to write extra code to get there.

Inside the test function, things change a bit. This time we're telling TestCafe to select specific form elements and type something in them using the typeText function, as well as click on an element using the click function. Since these actions take place on the same page and are usually done in sequence, we can chain the functions together, and TestCafe executes them in order.

The typeText function has two parameters - the selector of the element, and the text you want to type into that element. Note that we're not using the Selector function to indicate which element we want to use for typing any text. If you specify a string as a CSS selector, the typeText function handles that automatically for you.

The click function is similar to the typeText function. It just has a single parameter, which is the selector of the element you want the test to click. Like the typeText function, it's not necessary to use the Selector function - a string with a CSS selector is enough.

The remainder of the test is the same as before - find an h1 element with specific text and run an assertion. It's a simple way to verify that the login flow is working.
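
Beyond the two parameters described above, typeText also accepts an optional third argument with options. One of them is replace, which removes any text already in the field before typing. The sketch below wraps that in a small helper; fillForm and the shape of its values argument are invented for this example, and the email address in the usage note is a placeholder.

```javascript
// Hypothetical helper (not a TestCafe function): types a value into
// each field of a form, overwriting whatever the field already holds.
// `t` is the test controller; `values` maps CSS selectors to text.
async function fillForm(t, values) {
  for (const [selector, text] of Object.entries(values)) {
    // `replace: true` clears the field's current text before typing.
    await t.typeText(selector, text, { replace: true });
  }
}
```

In the login test, this could be called as await fillForm(t, { "#user_email": "user@example.com", "#user_password": "airportgap123" }) before clicking the submit button. For a two-field form the chained version is just as clear, so a helper like this mostly pays off on longer forms.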

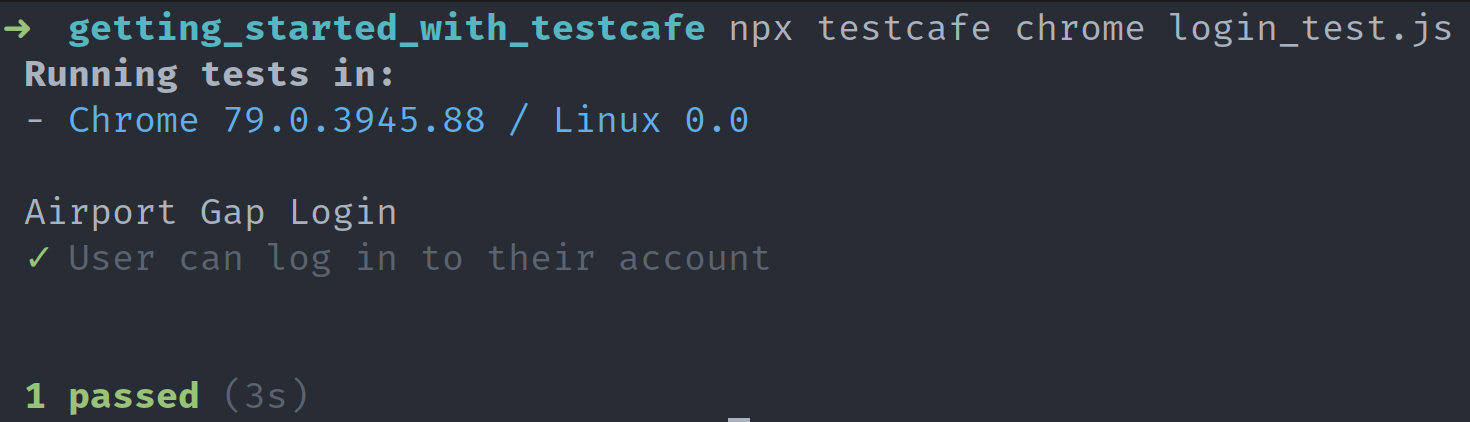

Run the test as before, making sure to use the file name for the new test:

    npx testcafe chrome login_test.js

The Google Chrome browser opens again. For this test, you'll see more activity. TestCafe loads the login page, and you'll see the login form getting filled as if someone were typing it in. TestCafe fills out the email and password fields for a pre-existing user, clicks the "Log In" button, and the user's account page loads. Finally, TestCafe runs our assertion to check that the specified element exists.

Cleaning up our tests with the Page Model pattern

As you can see, selectors make up a large part of TestCafe tests. It's unsurprising, given that end-to-end tests for web applications typically work this way. You load the site, perform a couple of actions, and verify that the correct result is on the page.

The examples written so far are simple, so it's not a problem keeping these selectors as they are in the tests. However, as your test suite expands and new features get added to your application, these selectors can become a hindrance.

One main issue with writing selectors in your tests as we've done is if you have to use them in multiple places. For instance, if front-end developers change the name of the element or its contents, you'll need to change the selectors in the tests. If you scatter your selectors throughout different tests or even different files, it's painful to go through each one and change them.

Another issue is the possibility of elements becoming more complex as the application grows. Many modern web applications, particularly Progressive Web Apps and single-page apps, generate markup using different methods like service workers. Writing selectors for these elements is tricky, making tests less readable and difficult to follow.

To handle these issues, TestCafe recommends using the Page Model pattern. The Page Model pattern allows you to abstract the selectors from the tests. Instead of writing the selector in the test, you define the selector separately and refer to it when needed. This way, you can keep all of your selectors in one place. If the element changes in the application, you only need to update it in a single place.

It also helps improve the readability of your tests. For example, instead of writing a selector for an input field like input[type='text'], you write a more descriptive name like loginPageModel.emailInput. Anyone reading the test should have a clear idea about that element immediately without having to look it up.

Let's demonstrate how the Page Model pattern helps by updating our existing tests. First, we'll start updating the home page test. We can begin by creating a sub-directory inside our test directory called page_models. The sub-directory is not necessary, but it keeps our test suite tidy. Create a file inside this sub-directory called home_page_model.js. Here, we'll write our model to use in our tests.

In TestCafe, the recommended way to implement the Page Model pattern is to create a JavaScript class. Open the home_page_model.js file and create the following class:

    import { Selector } from "testcafe";

    class HomePageModel {
      constructor() {
        this.subtitleHeader = Selector("h1").withText(
          "An API to fetch and save information about your favorite airports"
        );
      }
    }

    export default new HomePageModel();

This code is a plain JavaScript class. Inside the class's constructor, we'll create class properties for each element selector that we want to use. These properties are what we'll access inside our tests, as we'll see soon. Finally, after defining our class, we export a new instance of the class, so it's ready to use in our tests.

If you're unfamiliar with JavaScript classes or are confused about what's happening in this file, I recommend reading more about them on MDN.

Once we create our page model class, let's put it to use in the home page test:

    import homePageModel from "./page_models/home_page_model";

    fixture("Airport Gap Home Page").page(
      "https://airportgap-staging.dev-tester.com/"
    );

    test("Verify home page loads properly", async t => {
      await t.expect(homePageModel.subtitleHeader.exists).ok();
    });

There was a bit of cleanup that happened in this file. The main change was importing the page model instance that our new file exports, which we've named homePageModel. With this in place, we can access our selectors through the page model's properties. The code where the selector was previously specified is gone, and in its place, we refer to the selector with homePageModel.subtitleHeader. Since we're no longer calling the Selector function, the import statement that we had previously is gone.

Let's implement the same changes in the login test. Inside the page_models sub-directory, create a new file called login_page_model.js. Again, we're using a separate file to separate the page models by page. It keeps things clean and avoids confusing which selector belongs to which page. You can still use the same file as before and write as many selectors as you wish.

Inside login_page_model.js, we'll create a JavaScript class and set the selectors as we did before:

    import { Selector } from "testcafe";

    class LoginPageModel {
      constructor() {
        this.emailInput = Selector("#user_email");
        this.passwordInput = Selector("#user_password");
        this.submitButton = Selector("input[type='submit']");
        this.accountHeader = Selector("h1").withText("Your Account Information");
      }
    }

    export default new LoginPageModel();

We can now use the new page model in the login test to clean up the selectors:

    import loginPageModel from "./page_models/login_page_model";

    fixture("Airport Gap Login").page(
      "https://airportgap-staging.dev-tester.com/login"
    );

    test("User can log in to their account", async t => {
      await t
        .typeText(loginPageModel.emailInput, "[email protected]")
        .typeText(loginPageModel.passwordInput, "airportgap123")
        .click(loginPageModel.submitButton);

      await t.expect(loginPageModel.accountHeader.exists).ok();
    });

The changes made here are similar to the previous changes. We imported the new page model and removed the selectors in the test. The properties from the page model replace the selectors. With these changes completed, you can run the tests to ensure that everything runs as before.

For these examples, it may seem like this is extra work. But the benefits of using the Page Model pattern become more evident as you write more tests. As you build your end-to-end test suite that covers more of your web application, having your defined selectors in one place makes your tests manageable. Even with changing a handful of selectors in these tests, you can see that the tests are more readable at a glance.
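
A page model can hold more than selectors. One common extension, sketched below, is to give the class a method that performs a whole flow, so a test that needs a logged-in user doesn't repeat the typing and clicking. The logIn method is an addition made up for this example and is not part of the models above. To keep the sketch free of imports, it stores plain CSS strings instead of Selector objects, which TestCafe's actions accept as well.

```javascript
// A trimmed-down variation of the login page model, for illustration only.
class LoginPageModel {
  constructor() {
    // Plain CSS strings; typeText and click accept these
    // as well as Selector objects.
    this.emailInput = "#user_email";
    this.passwordInput = "#user_password";
    this.submitButton = "input[type='submit']";
  }

  // Hypothetical action method: fills in and submits the login form
  // using the test controller `t` passed in from the test.
  async logIn(t, email, password) {
    await t
      .typeText(this.emailInput, email)
      .typeText(this.passwordInput, password)
      .click(this.submitButton);
  }
}
```

With a method like this added to the class in login_page_model.js, the body of the login test could shrink to a call such as await loginPageModel.logIn(t, email, password), followed by the assertion on the account header.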

Summary

This article shows how quickly you can set up TestCafe and have useful end-to-end tests for your project.

You don't have to deal with installing multiple dependencies anymore. All you need is a single command, and you'll have the power of TestCafe at your fingertips.

Writing tests is also straightforward. With just two examples in this article, you can see how having end-to-end tests with TestCafe can help check your application's common paths instantly. The tests are simple enough but pack a punch when it comes to running an entire automated flow. These kinds of tests free up your time from repetitive work.

These examples barely scratch the surface of what TestCafe can do. It's a potent tool that has a lot more functionality than what's shown here. Some of the more useful functions that weren't covered here are:

- Running your test suite concurrently using different browsers. For instance, you can tell TestCafe to run the tests in Google Chrome and Microsoft Edge at the same time on a Windows PC.

- Running your tests in headless mode when available. Google Chrome and Mozilla Firefox allow you to run TestCafe tests in headless mode, meaning that the browser runs without any UI. This functionality is crucial for running tests on a continuous integration service, where there's no graphical interface.

- Lots of different actions for interacting with your application. Beyond the typing and clicking shown in the examples above, TestCafe can do more interactions like dragging and dropping elements and uploading files.

- TestCafe has many useful ways to debug tests, like taking screenshots and videos, client-side debugging using the browser's developer tools, and pausing tests when they fail so you can interact with the page for investigation.
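
As a taste of the first two items in the list above, here is a minimal sketch that runs test files in several browsers from a Node.js script, using TestCafe's programmatic runner instead of the command line. The runSuite function is a name invented for this example. It takes the function exported by the testcafe package as its first argument, which is a choice made for this sketch so that the code doesn't hard-code the dependency.

```javascript
// Hypothetical wrapper around TestCafe's programmatic runner.
// `createTestCafe` is the function exported by the testcafe package:
//   const createTestCafe = require("testcafe");
// `browsers` is a list of browser aliases, `files` a list of test files.
async function runSuite(createTestCafe, browsers, files) {
  const testcafe = await createTestCafe("localhost");

  try {
    // run() resolves to the number of failed tests.
    const failedCount = await testcafe
      .createRunner()
      .src(files)
      .browsers(browsers)
      .run();

    return failedCount;
  } finally {
    // Always shut down TestCafe, even if the run throws.
    await testcafe.close();
  }
}
```

A call such as runSuite(require("testcafe"), ["chrome:headless", "firefox:headless"], ["home_test.js", "login_test.js"]) would run both test files in headless Chrome and headless Firefox, assuming both browsers are installed on the machine.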

I'll cover a lot more of TestCafe in future articles here on Dev Tester. Make sure you subscribe to the newsletter, so you continue sharpening your end-to-end testing skills with TestCafe.


You can find the code used in this article on GitHub.
